Building a camera that sees 360 degrees in 3D

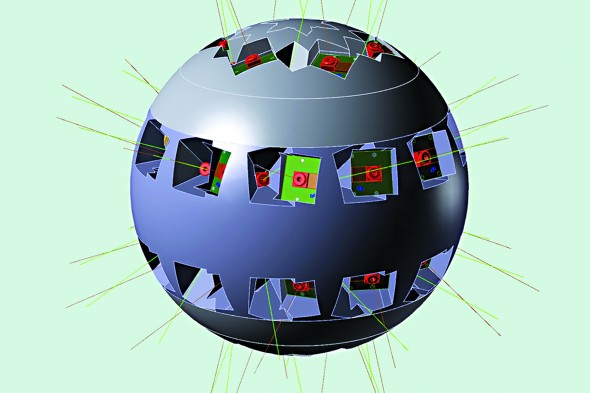

UIC’s Electronic Visualization Laboratory won a $3 million grant to build a camera that can capture images in 360 degrees. Illustration — Greg Dawe, UC San Diego

Many defining moments in scientific progress follow the introduction of new ways to observe the world, from microscopes and telescopes to X-rays and MRIs.

The Electronic Visualization Laboratory received a $3 million National Science Foundation grant to develop an extraordinary new camera — really, an array of dozens of video cameras — that can capture images in 360 degrees and three dimensions.

Imagine the difference between seeing a fish dart through the narrow field of an underwater camera and seeing the fish in its surroundings.

“We see a fish whip by, but you have no idea why. What might be chasing it? It’s a very myopic view,” said Daniel Sandin, co-founder of EVL, who worked on the development of the Sensor Environment Imaging (SENSEI) Instrument project.

SENSEI will show you what’s chasing the fish, how big the predator is and how far away it was when it spooked that fish.

The device will capture the whole sphere of the surrounding environment, in stereo with calibrated depth, providing information on how far away objects are and allowing estimates of their size and mass — all with true colors and brightness, Sandin said.

EVL has previously combined multiple images into a single complete 360-degree panorama, such as the display of Medinet Habu, the mortuary temple of Ramses III in Egypt, in the projected virtual reality of the CAVE2.

To create a composite view of multiple images, “you have to stitch together pictures that overlap and are taken at different angles,” said Maxine Brown, director of EVL and principal investigator for SENSEI.

But SENSEI is video, she said, “which means not one picture, but 30 per second, in stereo and from multiple cameras, not only set at different angles but sitting at different points.”

The cellphone market has driven improvements in the miniaturization and resolution of camera technology, which combine with EVL’s strength in visualization of big data and computer networks to make the project feasible, Brown said.

Researchers involved in the project include oceanographers who study coral reefs and kelp forests; computer scientists who track social networking in animals; astronomers looking at the night sky from a high-altitude balloon and telescopes around the world; archeologists interested in cultural heritage; and public health experts involved in emergency preparedness.

UIC computer science researchers Robert Kenyon, Andrew Johnson and Tanya Berger-Wolf are co-principal investigators on the grant.

Researchers at four other institutions contributing to the project are Truong Nguyen, University of California, San Diego; Jason Leigh, University of Hawaiʻi at Mānoa; Jules Jaffe, Scripps Institution of Oceanography; and François Modave, Jackson State University.